Episode 58 — Lightning Recap of Core Controls and Must-Knows.

In this episode, we’re going to do a lightning recap, but not the kind that rushes past ideas so fast they blur together. The point of a fast recap, especially for someone new, is to re-stitch the whole picture so you remember how the core controls connect and why they exist in the first place. In PCI work, individual requirements can feel like separate boxes, and that can make studying feel like memorizing a long checklist instead of understanding a living system. A better approach is to treat each control as part of a safety net around the Cardholder Data Environment (C D E), where one control catches mistakes that slip past another control. That net matters because payment environments are busy, and busy environments produce drift, shortcuts, and blind spots unless the controls are designed to resist normal human behavior. As we move through these must-knows, listen for the repeated themes: reduce exposure, control access, detect change, and prove what you did with evidence. Those themes are what make the details make sense.

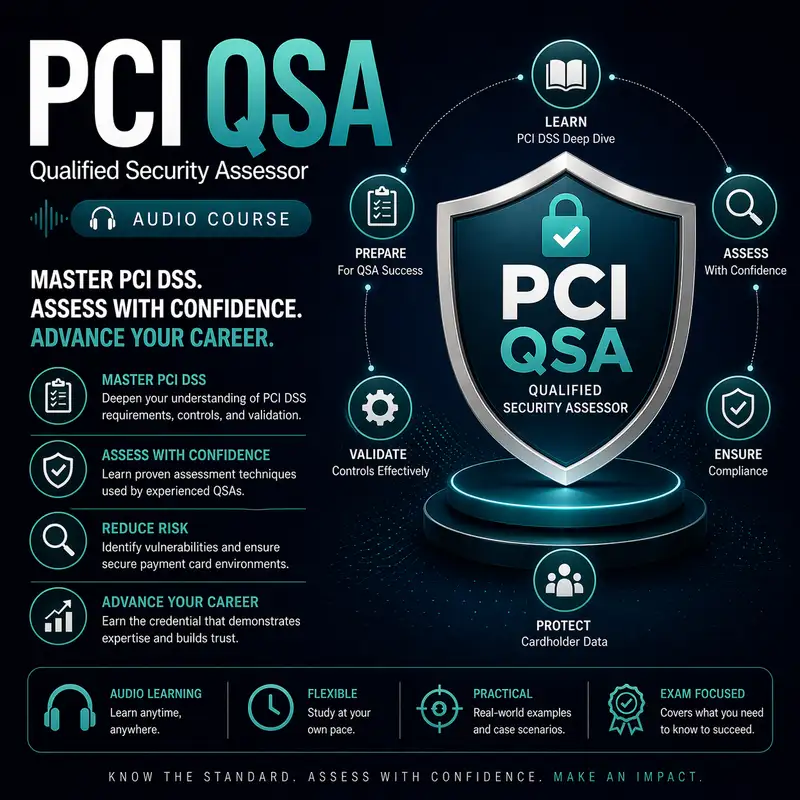

Before we continue, a quick note: this audio course has two companion books. The first book covers the exam and explains in detail how best to pass it. The second book is a Kindle-only eBook containing 1,000 flashcards that you can use on your mobile device or Kindle. Check them both out at Cyber Author dot me, in the Bare Metal Study Guides Series.

The first must-know is that scoping is not a paperwork step, because scope determines what you must protect, what you must test, and what you must prove. In a payment environment, scope follows data, especially the Primary Account Number (P A N), and it also follows influence, meaning systems that can change, observe, or weaken the security of the payment flow can fall into scope as well. Beginners often assume that only systems that store the P A N matter, but systems that route traffic, manage identities, deploy code, or hold secrets can matter just as much because they can reshape the environment overnight. Scoping becomes reliable when you can describe the payment flow in plain language, point to where sensitive data is processed or transmitted, and then explain what boundaries keep unrelated systems from affecting that flow. It also becomes reliable when you can demonstrate that boundaries are real through evidence, not just diagrams. If you remember only one thing about scope, remember that unclear scope is the root of most assessment confusion.
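To make the idea that scope follows data and then influence a little more concrete, here is a minimal sketch. The category wording, the `System` fields, and the sample inventory are illustrative assumptions, not terms quoted from the standard.

```python
from dataclasses import dataclass

@dataclass
class System:
    name: str
    handles_pan: bool      # stores, processes, or transmits the PAN
    can_affect_cde: bool   # e.g. identity, deployment, routing, secrets

def scope_category(system: System) -> str:
    """Classify a system by data first, then by influence."""
    if system.handles_pan:
        return "in scope: handles cardholder data"
    if system.can_affect_cde:
        return "in scope: can affect the CDE"
    return "out of scope, if boundary evidence supports it"

# Hypothetical inventory used only for illustration.
inventory = [
    System("payment-db", handles_pan=True, can_affect_cde=True),
    System("ci-deployer", handles_pan=False, can_affect_cde=True),
    System("hr-portal", handles_pan=False, can_affect_cde=False),
]
categories = {s.name: scope_category(s) for s in inventory}
for name, category in categories.items():
    print(f"{name}: {category}")
```

Notice that the deployment system never touches the P A N, yet it lands in scope because it can change the systems that do.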

Another must-know is segmentation, not as a buzzword but as a discipline of controlling reachability. Segmentation matters because it is how organizations keep the sensitive zone small, so fewer systems must meet the highest bar of controls. The key idea is that segmentation is proven by what can talk to what, not by what is labeled on a network diagram. A segment can look separated in theory but still be reachable in practice through misconfigured rules, shared services, or administrative pathways. That is why validation is about testing paths and understanding how changes could open paths, especially when environments are dynamic. Beginners sometimes think segmentation is an all-or-nothing feature, but the reality is that segmentation is a set of enforced decisions about which connections are allowed and which are blocked, and those decisions must be maintained over time. Good segmentation shrinks scope, reduces attack paths, and makes monitoring more meaningful because fewer routes exist. Weak segmentation creates hidden bridges into the C D E that can undermine many other controls.
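As a rough sketch of "what can talk to what", the snippet below compares observed connections against an allow-list of flows into the C D E. The zone names, ports, and observed flows are made up for illustration; in practice the observations would come from actual path testing or flow data.

```python
from typing import NamedTuple

class Flow(NamedTuple):
    src_zone: str
    dst_zone: str
    port: int

# Hypothetical allow-list: the only flows permitted into the CDE.
ALLOWED_FLOWS = {
    Flow("web", "cde", 443),
    Flow("jump", "cde", 22),
}

def unexpected_flows(observed):
    """Return observed flows into the CDE that no allow rule covers."""
    return [f for f in observed if f.dst_zone == "cde" and f not in ALLOWED_FLOWS]

# Hypothetical results of a reachability test.
observed = [
    Flow("web", "cde", 443),
    Flow("corp", "cde", 3389),   # a hidden bridge the diagram does not show
    Flow("corp", "web", 443),    # not a CDE destination, so ignored here
]
findings = unexpected_flows(observed)
print(findings)
```

The design choice is deliberate: the check starts from what was actually reachable, not from what the diagram says should be reachable.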

Access control is another core must-know, and the most important part is understanding that access is a lifecycle, not a single login event. Access begins with identity creation, continues through privilege assignment, and ends with removal, and every step can introduce risk if it is inconsistent. A payment environment becomes fragile when accounts are shared, privileges are broad by default, or reviews are treated as optional. The principle of least privilege is not a slogan; it is the practical method of making it harder for mistakes and attackers to have a large impact. When you think like an assessor, you want to see that access is tied to individuals, that elevated access is constrained, and that the organization can show routine review and offboarding activity. Beginners often overlook that service accounts and vendor accounts can be the most dangerous because they are long-lived and widely trusted. Strong access controls limit who can change the environment, who can see sensitive data, and who can disable logging, which protects every other control in the net.
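The lifecycle view can be sketched as a simple review pass over an account list. The `Account` fields, the sample accounts, and the 180-day review interval are assumptions chosen for illustration, not values taken from the standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

@dataclass
class Account:
    name: str
    owner: Optional[str]   # None models a shared account with no named individual
    owner_active: bool     # False once the owner has left or changed roles
    last_reviewed: date

def review_findings(accounts, today, max_age=timedelta(days=180)):
    """Map account name to the reasons it needs attention."""
    findings = {}
    for acct in accounts:
        reasons = []
        if acct.owner is None:
            reasons.append("not tied to an individual")
        elif not acct.owner_active:
            reasons.append("owner offboarded, access not removed")
        if today - acct.last_reviewed > max_age:
            reasons.append("review overdue")
        if reasons:
            findings[acct.name] = reasons
    return findings

# Hypothetical accounts for illustration.
accounts = [
    Account("alice", "alice", True, date(2024, 5, 1)),
    Account("shared-admin", None, True, date(2023, 1, 15)),
    Account("vendor-support", "bob", False, date(2024, 4, 20)),
]
findings = review_findings(accounts, today=date(2024, 6, 1))
print(findings)
```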

Logging and monitoring are must-knows because they are the environment’s memory, and you cannot defend what you cannot see. In PCI contexts, it is not enough that logs exist somewhere; the organization must be able to demonstrate that logs are collected, protected, retained, and reviewed in a way that supports detection and investigation. The misconception beginners often have is that logging is purely technical, like a switch you turn on, but the real value comes from the human process that turns log signals into action. Monitoring means noticing unusual behavior, and review means making that noticing routine, not occasional. It also means having the ability to connect events across systems, which depends on consistent time, consistent identity attribution, and consistent capture of key events. When logging is weak, incidents become stories told from fragments and guesses, and those guesses lead to slow response and incorrect conclusions. Strong logging makes it possible to say what happened, when it happened, and who did it, which is the basis of accountability.
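One small way to picture "what happened, when it happened, and who did it" is a completeness check on log events. The field names and the sample events below are hypothetical; real log schemas vary by system.

```python
# Hypothetical field names an event needs to answer who, what, and when.
REQUIRED_FIELDS = ("timestamp", "user", "event", "outcome")

def incomplete_events(events):
    """Return (index, missing_fields) for events with absent or empty fields."""
    problems = []
    for i, event in enumerate(events):
        missing = [f for f in REQUIRED_FIELDS if not event.get(f)]
        if missing:
            problems.append((i, missing))
    return problems

# Made-up events for illustration.
events = [
    {"timestamp": "2024-06-01T10:00:00Z", "user": "alice", "event": "login", "outcome": "success"},
    {"timestamp": "2024-06-01T10:00:05Z", "user": "", "event": "config_change", "outcome": "success"},
    {"user": "bob", "event": "log_disabled"},
]
problems = incomplete_events(events)
print(problems)
```

An event with no user or no timestamp is exactly the kind of fragment that forces an investigation to rely on guesses.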

Time synchronization is a must-know because it is what makes logs and evidence coherent instead of contradictory. When systems disagree about time, the event timeline becomes unreliable, and that damages detection, investigation, and even basic troubleshooting. The key concept is that time should come from a trusted reference and be distributed consistently so that drift is detected and corrected. Beginners sometimes assume a few minutes of drift does not matter, but it matters when automated systems act in seconds and when multiple systems are involved in one transaction. Reliable time also supports other controls like certificate validation and session management, where time-based decisions can break or behave unpredictably if clocks are wrong. From a validation perspective, synchronized time is one of those foundational proofs that makes other proofs more credible, because it shows the environment can maintain operational consistency. A reliable timeline is how you demonstrate that alerts occurred before actions, that reviews occurred when they should, and that changes align with approved windows. Without reliable time, the environment’s evidence story becomes shaky.
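A minimal sketch of detecting drift against a trusted reference follows. The host names, the sampled clock values, and the one-second tolerance are assumptions for illustration only.

```python
from datetime import datetime, timedelta, timezone

def drifted_hosts(reference, host_clocks, tolerance=timedelta(seconds=1)):
    """Return each host whose clock differs from the reference by more than tolerance."""
    return {
        host: clock - reference
        for host, clock in host_clocks.items()
        if abs(clock - reference) > tolerance
    }

# Hypothetical clock readings taken at the same instant.
reference = datetime(2024, 6, 1, 12, 0, 0, tzinfo=timezone.utc)
host_clocks = {
    "web-01": reference + timedelta(milliseconds=200),
    "db-01": reference - timedelta(seconds=95),
    "log-01": reference,
}
drift = drifted_hosts(reference, host_clocks)
for host, offset in drift.items():
    print(f"{host} is off by {offset.total_seconds()} seconds")
```

A host that runs 95 seconds behind would make its events appear to happen before the actions that caused them, which is how timelines become contradictory.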

Change control is a must-know because change is the natural enemy of stable security controls. Payment environments must evolve, and updates are necessary for security, but unmanaged change creates drift, surprises, and gaps that attackers exploit. A mature change and release pipeline is not about slowing everything down; it is about keeping changes traceable, tested, and reversible. Beginners sometimes imagine change control as a form, but it is better understood as a set of gates: review, approval, testing, deployment, and post-change validation. Those gates reduce mistakes and prevent one person from unilaterally altering sensitive systems without oversight. Emergency change is where discipline matters most, because urgency pushes people toward shortcuts, and shortcuts often become permanent exceptions if they are not revisited. In PCI work, change control also protects scope, because a small change can accidentally introduce new data storage, new connectivity, or new scripts that expand exposure. Strong change control is what keeps yesterday’s secure design from becoming today’s uncontrolled reality.
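The gates described above can be sketched as a check on a change record. The record layout, the gate identifiers, and the two sample changes are hypothetical and only illustrate the idea.

```python
# Gate names follow the list in the text; the identifiers are illustrative.
GATES = ("review", "approval", "testing", "deployment", "post_change_validation")

def gate_problems(change):
    """List the ways a change record falls short of the gates."""
    problems = [f"missing gate: {g}" for g in GATES if g not in change["completed_gates"]]
    if change["approver"] == change["author"]:
        problems.append("author approved their own change")
    if change["emergency"] and not change.get("follow_up_review"):
        problems.append("emergency change not revisited")
    return problems

# Hypothetical change records.
normal = {"author": "alice", "approver": "bob", "emergency": False,
          "completed_gates": set(GATES)}
hotfix = {"author": "carol", "approver": "carol", "emergency": True,
          "completed_gates": {"deployment"}}

normal_problems = gate_problems(normal)
hotfix_problems = gate_problems(hotfix)
print(normal_problems)
print(hotfix_problems)
```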

File integrity monitoring is a must-know because it catches drift in the places where drift can be most dangerous: the files and settings that define how systems behave. A system can still appear to work while its integrity is compromised, especially if a malicious actor modified a script, a configuration, or a binary in a subtle way. The most important beginner takeaway is that integrity monitoring is not about watching everything; it is about watching the right things and reacting when the baseline is violated. A baseline is the approved known state, and drift is the unapproved movement away from that state. The reason this matters in payment environments is that small changes can create big effects, like weakening authentication, disabling logging, or injecting malicious behavior into a payment flow. Integrity monitoring only helps if it is linked to a response process, because alerts that nobody reviews become noise and eventually get ignored. When integrity monitoring is tuned well and acted on consistently, it becomes an early warning system that reduces the time an attacker can remain hidden and reduces the chance that accidental changes become permanent risk.
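Baseline and drift can be illustrated with a small hashing sketch. This is not how any particular monitoring product works; the file names and contents are made up, and a temporary directory stands in for a real system.

```python
import hashlib
import tempfile
from pathlib import Path

def snapshot(paths):
    """Record a SHA-256 digest for each watched file."""
    return {str(p): hashlib.sha256(Path(p).read_bytes()).hexdigest() for p in paths}

def drifted(baseline, current):
    """Return watched paths whose digest no longer matches the baseline."""
    return sorted(p for p, digest in baseline.items() if current.get(p) != digest)

with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical watched files: one config, one payment script.
    config = Path(tmp) / "sshd_config"
    script = Path(tmp) / "checkout.js"
    config.write_text("PermitRootLogin no\n")
    script.write_text("submitPayment();\n")
    watched = [config, script]

    baseline = snapshot(watched)                 # the approved known state
    config.write_text("PermitRootLogin yes\n")   # an unapproved change
    changed = drifted(baseline, snapshot(watched))
    changed_names = [Path(p).name for p in changed]

print(changed_names)
```

The watch list is short on purpose: the sketch watches the files that define behavior, not everything on disk.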

Wireless and remote access are must-knows because they are classic weak-link zones where convenience can override discipline. Wireless extends the network beyond physical walls, and remote access extends privileged connectivity beyond the organization’s direct control, so both require stronger identity, tighter authorization, and clearer monitoring. Beginners sometimes assume the risk is only the connection method, but the more important risk is what becomes reachable once someone is connected. A secure design ensures wireless and remote access do not create shortcuts into sensitive networks, and a secure process ensures accounts are not shared, privileges are not overly broad, and access is time-bounded when possible. Vendor and support access deserves special attention because it combines remote connectivity and elevated privilege with shared responsibility, which is a recipe for gaps unless guardrails exist. The must-know here is that access should be accountable, limited, monitored, and removed when no longer needed. When wireless and remote access are controlled thoughtfully, they become managed capabilities instead of permanent back doors.

Protecting payment pages is a must-know because malicious scripts can steal data at the exact moment customers enter it, bypassing many other protections. This threat is especially dangerous because it can keep the payment process functioning while silently exfiltrating sensitive values, which means it can persist unnoticed if the organization lacks visibility into what code runs on checkout pages. The most important concept is script governance, meaning you know what scripts are present, why they are present, where they come from, and how changes are approved. Third-party scripts, analytics tools, and tag systems can create long chains of trust, and long trust chains are where compromise often enters indirectly. The must-know is that payment pages should be treated as high-sensitivity zones where changes are controlled more strictly than ordinary website content. Monitoring must detect unexpected changes or new code, and the organization must be ready to respond quickly when drift is detected. When you treat the browser execution environment as part of the payment system, you naturally build controls that reduce skimmer opportunities.
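Script governance can be sketched as an inventory of approved scripts with a recorded hash for each, compared against what the page actually loads. The URLs and script contents are invented for illustration; the hash uses the `sha384-` plus base64 form that Subresource Integrity values take.

```python
import base64
import hashlib

def sri_hash(content: bytes) -> str:
    """Compute an integrity value in the sha384-<base64> form."""
    return "sha384-" + base64.b64encode(hashlib.sha384(content).digest()).decode()

def script_findings(approved, observed):
    """Flag scripts that are unknown or whose content no longer matches."""
    findings = []
    for url, content in observed.items():
        if url not in approved:
            findings.append((url, "not in inventory"))
        elif approved[url] != sri_hash(content):
            findings.append((url, "content changed"))
    return findings

# Hypothetical approved inventory: URL mapped to the approved hash.
approved = {
    "https://shop.example/js/checkout.js": sri_hash(b"submitPayment();"),
    "https://cdn.example/analytics.js": sri_hash(b"track();"),
}
# Hypothetical scripts seen on the checkout page today.
observed = {
    "https://shop.example/js/checkout.js": b"submitPayment();",
    "https://cdn.example/analytics.js": b"track(); sendTo('evil.example');",
    "https://unknown.example/x.js": b"collect();",
}
findings = script_findings(approved, observed)
print(findings)
```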

Data minimization is a must-know because the safest sensitive data is the data you never store. Retention and purging reduce scope and reduce risk by shrinking the footprint of the Primary Account Number (P A N) and other sensitive values across databases, logs, backups, exports, and side systems. Beginners often think retention is only a legal topic, but it is also a security lever that determines how many systems must be protected and for how long. Purging must be routine and aligned across primary stores and backups, or else old data persists quietly and undermines scope reduction claims. Another must-know is that human workflows create data copies, like spreadsheets and tickets, so minimization succeeds only when business processes are designed to avoid needing full sensitive values in the first place. Masking helps by limiting what people see during routine tasks, while stronger protections like encryption reduce exposure if storage is accessed improperly. The core idea is to make sensitive data rare, short-lived, and confined to a small controlled zone.
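Here is a minimal sketch of masking and of catching card numbers that leak into side systems such as logs. It assumes a display policy of first six and last four digits, and it uses the Luhn checksum to tell likely card numbers from other long digit strings. The sample line is made up, and the card number is a widely used test value.

```python
import re

def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, then sum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def mask_pan(pan: str) -> str:
    """Keep the first six and last four digits; mask the rest."""
    return pan[:6] + "*" * (len(pan) - 10) + pan[-4:]

def redact_pans(text: str) -> str:
    """Mask any 13 to 19 digit run that passes the Luhn check."""
    def replace(match):
        candidate = match.group(0)
        return mask_pan(candidate) if luhn_valid(candidate) else candidate
    return re.sub(r"\b\d{13,19}\b", replace, text)

line = "order 1234567890123456 paid with card 4111111111111111"
redacted = redact_pans(line)
print(redacted)
```

Masking at the point of logging keeps the full value out of the log in the first place, which is cheaper than purging it later.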

Encryption and tokenization are must-knows because they are often chosen for the wrong reasons, and wise choices shape scope and operational burden. Encryption protects confidentiality by using keys, but it does not automatically reduce the sensitive footprint because encrypted sensitive values can still be widely stored and widely accessible if decryption capability is broad. Tokenization replaces sensitive values with stand-ins that are not mathematically reversible on their own, which can reduce where real values appear, but it concentrates risk into the mapping system and the pathways that can restore original values. The must-know is that both approaches rely on access discipline and monitoring, because the value of encryption is limited by key governance, and the value of tokenization is limited by how tightly de-tokenization is controlled. Beginners sometimes assume one technique is always better, but the wise approach is to match the technique to business needs, such as how often the full value must be retrieved. Combining approaches can work well if boundaries are clear, but layering them without clear data handling rules can create confusion and drift. A strong environment is one where the data model is consistent and the proof story is sustainable.
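The tokenization trade-off can be sketched with a toy vault. Everything here is hypothetical: the `TokenVault` class, the role names, and the in-memory mapping, which a real system would protect with encryption and strict access controls.

```python
import secrets

class TokenVault:
    """Toy vault: random tokens, with the mapping as the concentrated risk."""

    def __init__(self, authorized_roles):
        self._by_token = {}
        self._by_pan = {}
        self._authorized_roles = set(authorized_roles)

    def tokenize(self, pan):
        # The token is random, so it cannot be reversed without the mapping.
        if pan not in self._by_pan:
            token = "tok_" + secrets.token_hex(8)
            self._by_pan[pan] = token
            self._by_token[token] = pan
        return self._by_pan[pan]

    def detokenize(self, token, role):
        # De-tokenization is the pathway that must be tightly controlled.
        if role not in self._authorized_roles:
            raise PermissionError(f"role {role!r} may not detokenize")
        return self._by_token[token]

vault = TokenVault(authorized_roles={"settlement"})
pan = "4111111111111111"
token = vault.tokenize(pan)
restored = vault.detokenize(token, role="settlement")

try:
    vault.detokenize(token, role="support")
    denied = False
except PermissionError:
    denied = True

print(token.startswith("tok_"), restored == pan, denied)
```

Downstream systems can hold the token freely, but every pathway that can call `detokenize` inherits the sensitivity of the original value.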

Certificates and Transport Layer Security (T L S) are must-knows because weak lifecycle management creates outages and risky workarounds. Certificates prove endpoint identity and support encrypted connections, and those connections protect payment data in transit across networks and services. Expiry drama happens when certificates are treated as a one-time install rather than as a managed asset with ownership, inventory, and predictable renewal routines. The must-know is that secure connections are only reliable if the organization knows what certificates exist, where they are used, when they expire, and how renewals are coordinated without breaking dependencies. A brittle environment often responds to certificate failures by disabling validation or weakening security settings just to restore service, which can create a longer-term exposure far worse than the original expiry problem. Lifecycle discipline also supports incident response, because the ability to rotate certificates quickly is part of responding to suspected compromise. When certificate management is organized, encryption in transit remains dependable rather than accidental, and dependable controls are the ones people do not bypass under pressure.
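Treating certificates as managed assets can be sketched as an inventory with owners and expiry dates, plus a routine that surfaces what needs renewal. The record fields, the host names, and the 30-day window are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class CertRecord:
    common_name: str
    owner: str
    not_after: date   # expiry date

def renewal_queue(inventory, today, window=timedelta(days=30)):
    """Return certificates that are expired or expire within the window, soonest first."""
    due = [c for c in inventory if c.not_after - today <= window]
    return sorted(due, key=lambda c: c.not_after)

# Hypothetical inventory.
inventory = [
    CertRecord("pay.example", "payments-team", date(2024, 6, 10)),
    CertRecord("api.example", "platform-team", date(2025, 1, 1)),
    CertRecord("old.example", "unknown", date(2024, 5, 20)),
]
queue = renewal_queue(inventory, today=date(2024, 6, 1))
for cert in queue:
    print(cert.common_name, cert.owner, cert.not_after)
```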

Evidence handling and reporting are must-knows because PCI work is ultimately evidence-based, and the evidence itself can become sensitive and risky if handled casually. Evidence can contain diagrams, system names, configuration details, and sometimes sensitive data fragments, so it must be protected for confidentiality and integrity, and organized for traceability. A systematic evidence approach collects only what is needed, stores it in controlled repositories, and retains it only as long as required, so the assessment process does not create a shadow data store. Report writing also matters because examiners dislike vagueness, generic templating, inconsistent scope narratives, and conclusions that are stronger than the evidence supports. The must-know is that a good Report on Compliance (R O C) tells a clear story that connects requirements to tests to evidence to conclusions, with consistent terminology and defensible sampling. When evidence and writing discipline are strong, the assessment becomes calmer because questions can be answered quickly and transparently. When evidence and writing are weak, the assessment becomes stressful because everything feels like it depends on memory and improvisation.

As we close this lightning recap, the big picture to hold onto is that PCI security is not a collection of isolated requirements, but a system of reinforcing habits that keep a payment environment controlled, observable, and resilient to drift. Scope defines what matters, segmentation and access control define who can reach what, and logging and time synchronization make it possible to know what happened when something goes wrong. Change control and integrity monitoring keep the environment from slowly becoming something nobody intended, while wireless, remote access, and vendor access guardrails prevent convenience paths from becoming permanent weak links. Payment page protection, data minimization, and smart choices between tokenization and encryption reduce exposure at the moments and places that matter most. Certificate lifecycle discipline keeps encryption in transit reliable, and systematic evidence handling keeps the proof of all these controls safe and usable. When you connect these ideas, the standard stops feeling like a checklist and starts feeling like a set of design principles that keep payment systems stable under real-world pressure.