Episode 51 — Build Clear Shared Responsibility Matrices That Work.

In this episode, we’re going to focus on a skill that separates smooth, confident PCI work from frustrating misunderstandings: building a shared responsibility matrix that people can actually use. When payment environments involve cloud platforms, managed service providers, software vendors, and internal teams with different priorities, it becomes surprisingly easy for critical controls to fall through the cracks. One group assumes another group is handling logging, patching, key management, or access reviews, and nobody discovers the gap until an incident happens or an assessment forces the questions into the open. The purpose of a shared responsibility matrix is to turn vague assumptions into explicit assignments that can be tested, monitored, and evidenced. For a Qualified Security Assessor (Q S A), this is not a paperwork exercise, because unclear responsibility makes evidence hard to produce and makes real security controls unreliable. For a brand-new learner, the best way to understand this topic is to treat it as a translation layer between technology and accountability, where every control has an owner, an operator, and proof that it is happening in real life.

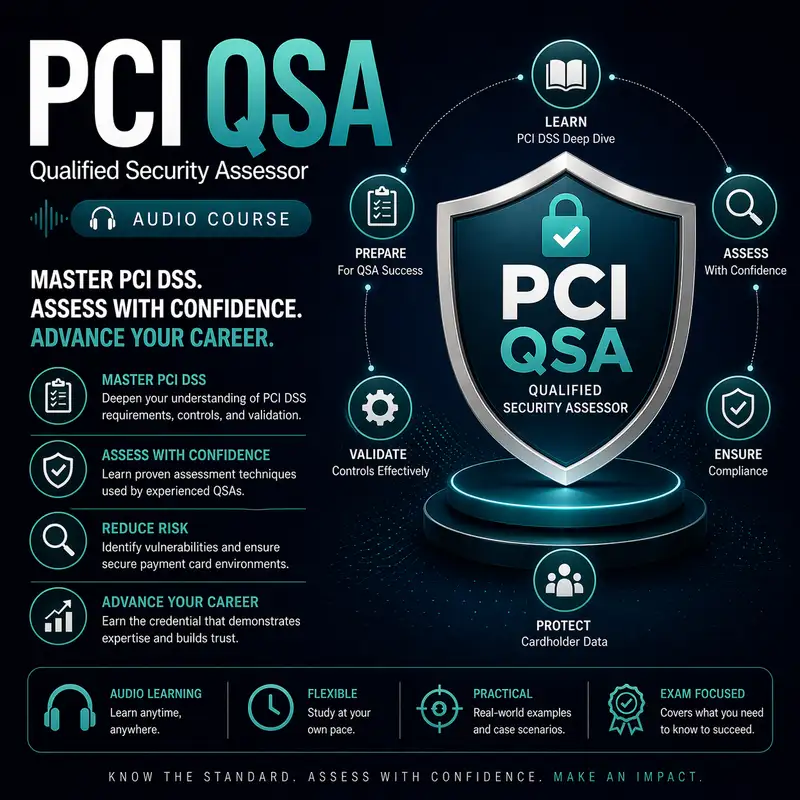

Before we continue, a quick note: this audio course is a companion to our course books. The first book is about the exam and provides detailed guidance on how best to pass it. The second book is a Kindle-only eBook that contains 1,000 flashcards that can be used on your mobile device or Kindle. Check them both out at Cyber Author dot me, in the Bare Metal Study Guides Series.

Shared responsibility becomes tricky in payment environments because controls are not isolated tasks, and each control usually has multiple moving parts across multiple domains. Take logging as a simple example: one party may generate logs, another party may transport them, another party may store them, and another party may review them and respond to alerts. If you only assign logging to a single owner, you miss the reality that logging is a chain, and chains break at the handoff points. The same pattern appears with patching, where one party may maintain the operating system, another party may maintain the application, and another party may approve downtime windows. It also appears with network segmentation, where a cloud provider may supply the network primitives, but the customer defines what is allowed, and an operations team implements and verifies the design. A shared responsibility matrix works when it describes responsibility at the level of these real handoffs, not just at the level of high-level categories. When it fails, it tends to be because it is too abstract, too optimistic, or too disconnected from how work actually gets done across teams and vendors.
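
To make the handoff idea concrete, here is a minimal Python sketch, assuming a matrix entry for logging is stored as a simple mapping from each stage of the chain to the party that owns it. The stage names and party names are hypothetical examples chosen for illustration, not terms taken from the standard. The function simply reports any stage with no named owner, which is exactly where a chain tends to break.

```python
from typing import Optional

# Hypothetical logging chain: each handoff stage maps to the party that owns it.
# None (or an empty string) means nobody has been assigned, which is the gap we want to surface.
LOGGING_CHAIN: dict[str, Optional[str]] = {
    "generate": "cloud provider",
    "transport": "managed service provider",
    "store": "internal platform team",
    "review_and_respond": None,
}


def unowned_stages(chain: dict[str, Optional[str]]) -> list[str]:
    """Return the stages of a control chain that have no assigned owner."""
    return [stage for stage, owner in chain.items() if not owner]


if __name__ == "__main__":
    for stage in unowned_stages(LOGGING_CHAIN):
        print(f"Gap: no owner assigned for logging stage '{stage}'")
```

The same shape works for patching or segmentation: list the real handoff points first, then assign each one, rather than assigning the whole control to a single party.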

A practical way to define the problem is to recognize that shared responsibility is not the same as shared ownership, and those words often get mixed up. Shared responsibility means multiple parties contribute to the outcome, but it does not mean everyone is equally accountable for the result. If everyone is accountable, nobody is accountable, and the control becomes a group assumption rather than a managed activity. In payment environments governed by the Payment Card Industry Data Security Standard (P C I D S S), accountability must be crisp enough that a Q S A can trace a control from requirement to implementation to evidence. That trace requires named roles, defined processes, and known artifacts, not just a statement that the vendor handles it. The matrix should answer questions like who performs the task, who verifies it occurred, who approves changes, and who retains evidence. Beginners sometimes believe responsibility is implied by contracts, but contracts often describe service outcomes broadly and do not map cleanly to security controls unless you translate them deliberately. The shared responsibility matrix is that translation, and it needs to be written in plain, testable language.

The best shared responsibility matrices start with scope clarity, because you cannot assign responsibility for controls until you agree on what systems and data are in scope. In PCI terms, you need a clear definition of the Cardholder Data Environment (C D E) and the payment flow boundaries, including any connected systems that can impact security. You also need clarity on where the Primary Account Number (P A N) is processed, transmitted, or stored, because that determines where the strongest controls must be applied and who must be involved. Scope is often the first hidden risk in shared responsibility because organizations may assume a hosted service is out of scope while still allowing it to influence in-scope systems. Another common issue is assuming that a cloud platform takes responsibility for encryption simply because it offers encryption features, when in reality the customer must enable and manage them. A Q S A will often find that confusion about scope and data flow leads directly to confusion about responsibility, because teams cannot assign tasks to systems they have not clearly identified. A matrix that begins with a shared understanding of the environment reduces this confusion and makes the rest of the assignments more stable.

Once scope is clear, the matrix needs a consistent structure so that each control is described the same way, which prevents gaps created by inconsistent thinking. A simple and durable approach is to define each control outcome and then assign roles for who does the work, who approves it, and who verifies it. Some organizations use a Responsible, Accountable, Consulted, and Informed (R A C I) chart, a common form of responsibility assignment matrix, to make these roles explicit, but the specific label is less important than the clarity it creates. What matters is that every control has an operator who performs the routine activity, an authority who can approve exceptions or changes, and a verifier who can confirm the control is working and can produce evidence. For example, if the control is quarterly access review, the operator might be the system owner, the authority might be the business owner who approves access decisions, and the verifier might be security or compliance who checks completion and retains records. The matrix should also capture frequency and triggers, because responsibility is meaningless if nobody knows when a task must occur. A Q S A will look for this operational clarity because it predicts whether the organization can provide evidence consistently throughout the year, not just during the assessment window.
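
If it helps to picture one row of that structure, here is a hedged Python sketch. The class name, field names, and the review function are hypothetical, invented for this illustration. The row holds the operator, authority, verifier, and frequency described above, and the check flags any empty role. It also flags rows where the operator verifies its own work; treating that as a problem is an assumption about good separation, not a quoted requirement.

```python
from dataclasses import dataclass


@dataclass
class ControlAssignment:
    """One row of a hypothetical shared responsibility matrix."""
    control: str     # the control outcome, in plain language
    operator: str    # performs the routine activity
    authority: str   # approves exceptions or changes
    verifier: str    # confirms the control works and retains evidence
    frequency: str   # when the task must occur, e.g. "quarterly"


def review_row(row: ControlAssignment) -> list[str]:
    """Return a list of structural problems found in one matrix row."""
    problems = []
    for field_name in ("operator", "authority", "verifier", "frequency"):
        if not getattr(row, field_name).strip():
            problems.append(f"{row.control}: missing {field_name}")
    # Assumption: a party checking its own work is weaker oversight, so flag it.
    if row.operator and row.operator == row.verifier:
        problems.append(f"{row.control}: operator also verifies its own work")
    return problems


access_review = ControlAssignment(
    control="Quarterly access review",
    operator="System owner",
    authority="Business owner",
    verifier="Security and compliance",
    frequency="quarterly",
)
print(review_row(access_review))  # prints []
```

Running the same check over every row is a quick way to see whether the matrix describes each control the same way.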

Shared responsibility matrices fail most often around gray-zone controls, which are controls that cross boundaries between providers and customers or between internal teams. Vulnerability management is a classic example. A managed service provider may patch the underlying operating system, but the customer may still be responsible for patching the application, testing changes, and ensuring that emergency fixes do not break payment functionality. If the matrix simply says the provider handles patching, the organization may miss the application layer entirely. Another gray-zone area is identity and access management, where a cloud provider may secure the identity platform itself, but the customer must configure policies, manage accounts, enforce multi-factor authentication, and remove access when staff leave. Logging and monitoring are another gray zone, because providers may offer log sources and storage services, but the customer must decide what to collect, how long to retain it, and who reviews alerts. A strong matrix does not hide these gray zones; it highlights them and breaks them into sub-responsibilities that reflect the real workflow. When a Q S A sees that level of detail, it is a signal that the organization has grappled with reality instead of relying on assumptions.

Another reason matrices fail is that they confuse capability with accountability, meaning they assign a control to whoever has the technical capability, not to whoever is accountable for the outcome. For example, an infrastructure team may have the capability to configure network rules, but a security team may be accountable for ensuring segmentation protects the C D E. If the matrix assigns segmentation entirely to infrastructure because they can click the settings, then security may not verify the design, and operations may not document the rationale. Over time, the environment can drift, and nobody notices until an assessment reveals the segmentation story is inconsistent. The matrix should reflect that capability and accountability are different dimensions. Capability answers who can do it, while accountability answers who must ensure it is done correctly and consistently. This distinction becomes even more important with vendors, because vendors may have deep capabilities, but the merchant is still accountable for PCI outcomes. The matrix should make that accountability visible by requiring the merchant to verify vendor claims, request evidence, and perform oversight rather than assuming the vendor’s controls automatically satisfy the merchant’s obligations.
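
One way to keep those two dimensions apart is to record them in separate columns. The Python sketch below is a minimal illustration under that assumption; the class, its fields, and the example rows are hypothetical. It flags any control where the party doing the work differs from the party accountable for it, yet no verification step has been written down.

```python
from dataclasses import dataclass


@dataclass
class Assignment:
    """Hypothetical matrix row that records capability and accountability separately."""
    control: str
    capable_party: str      # who can technically perform the work
    accountable_party: str  # who must ensure it is done correctly and consistently
    verification: str       # how the accountable party checks the work ("" if none)


def unverified_delegations(rows: list[Assignment]) -> list[str]:
    """Flag controls performed by one party but owned by another with no verification step."""
    return [
        row.control
        for row in rows
        if row.capable_party != row.accountable_party and not row.verification.strip()
    ]


rows = [
    Assignment("Network segmentation protecting the CDE",
               "Infrastructure team", "Security team", ""),
    Assignment("Operating system patching",
               "Managed service provider", "Merchant",
               "Request monthly patch report and review exceptions"),
]
print(unverified_delegations(rows))  # prints ['Network segmentation protecting the CDE']
```

The vendor row passes only because the merchant has written down how it checks the vendor’s work, which mirrors the oversight expectation described above.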

A matrix that works also needs to be anchored to evidence, because PCI assessments are ultimately evidence-based. For every responsibility assignment, the matrix should specify what proof will exist that the work was performed, where that proof is stored, and who can retrieve it. Evidence can include review records, change approvals, configuration baselines, monitoring reports, incident tickets, and attestation documents from a Third Party Service Provider (T P S P). The key is that evidence must be accessible and consistent, not scattered across personal inboxes or informal chat messages that cannot be retained properly. Beginners sometimes assume evidence is something you generate during an audit, but the healthier model is that evidence is a byproduct of normal operations. If the matrix assigns a monthly task, it should also establish where the monthly record lives and who checks that it exists. When evidence expectations are built into the matrix, teams begin to operate in a way that naturally produces what a Q S A needs to validate controls. That reduces last-minute scrambling and makes security routines more sustainable.
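
For the monthly-task case, the verifier’s check that “the record exists” can be as simple as the Python sketch below. It assumes, hypothetically, that each evidence record in the central repository carries the date it was filed; how those dates are pulled from the repository is left out. The function name and the sample dates are illustrative only.

```python
from datetime import date


def missing_months(evidence_dates: list[date], year: int, through_month: int) -> list[str]:
    """Return 'YYYY-MM' labels for months with no evidence record on file."""
    covered = {(d.year, d.month) for d in evidence_dates}
    return [
        f"{year}-{month:02d}"
        for month in range(1, through_month + 1)
        if (year, month) not in covered
    ]


# Hypothetical dates on which monthly review records were filed.
records = [date(2024, 1, 15), date(2024, 2, 12), date(2024, 4, 9)]
print(missing_months(records, year=2024, through_month=4))  # prints ['2024-03']
```

Running a check like this every month, rather than once before the assessment, is what turns evidence into a byproduct of normal operations.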

Shared responsibility matrices become especially important in cloud and managed environments where the phrase "the provider handles security" can mask the true distribution of tasks. Providers often secure the physical infrastructure, but customers secure configurations, access, data handling, and most of the operational controls that determine whether the C D E is protected. This is where beginners can get tripped up by the difference between shared responsibility as a general concept and a specific matrix that assigns tasks. A general concept might say the provider secures the platform and the customer secures what they build on it, but that statement is too broad for PCI validation. The matrix must get specific about what that means for key areas like network controls, encryption key ownership, log retention, vulnerability management, and incident response coordination. It must also account for the reality that customers often use multiple providers, meaning different parts of the payment flow have different responsibility splits. A Q S A will want to see that the organization has mapped each relevant service to specific control responsibilities and has not assumed one generic model applies everywhere.

A strong matrix also anticipates operational stress, because many responsibility failures occur during incidents, outages, and emergency changes. When something breaks, teams move fast, vendors jump in, and the priority becomes restoring service. In those moments, security controls can be bypassed unless responsibilities are clear and routines are practiced. The matrix should define who can authorize emergency access, who must monitor vendor sessions, who captures after-action records, and who ensures temporary changes are reversed. It should also define communication expectations, such as how the organization is notified when a vendor performs a high-impact action. This is where a Service Level Agreement (S L A) can support the matrix by establishing timeframes for response, reporting, and evidence delivery. The matrix does not replace contracts, but it translates the contract into operational accountability that the teams can follow under pressure. For beginners, the key insight is that a matrix that only works on calm days is not a matrix that works; real validation demands that controls remain dependable when the environment is under stress.
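
The “who ensures temporary changes are reversed” question is easy to lose after an outage, so here is a hedged Python sketch of one way to track it. The record layout, the revert-by date, and the example changes are all hypothetical. The function lists temporary changes that are past their deadline and still in place, along with the named reversal owner, or a marker when nobody was assigned.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class EmergencyChange:
    """Hypothetical record of a temporary change made during an incident."""
    description: str
    authorized_by: str              # who approved the emergency access or change
    reversal_owner: str             # who must confirm the temporary change is undone
    revert_by: date                 # deadline for reversing the change
    reverted_on: Optional[date] = None


def overdue_reversals(changes: list[EmergencyChange], today: date) -> list[str]:
    """List temporary changes that are past their revert-by date and not yet reversed."""
    return [
        f"{c.description} (owner: {c.reversal_owner or 'UNASSIGNED'})"
        for c in changes
        if c.reverted_on is None and today > c.revert_by
    ]


changes = [
    EmergencyChange("Vendor VPN access for outage", "IT operations manager",
                    "Security team", revert_by=date(2024, 5, 2)),
    EmergencyChange("Temporary firewall rule to CDE", "Change advisory board",
                    "", revert_by=date(2024, 5, 1), reverted_on=date(2024, 5, 1)),
]
print(overdue_reversals(changes, today=date(2024, 5, 10)))
```

Here the vendor access is reported as overdue with the security team as its owner, while the firewall rule is not, because it was already reversed.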

Another hidden risk in shared responsibility is the tendency to treat responsibility as static, even though environments change constantly. New services are adopted, teams reorganize, vendors change personnel, and payment flows evolve with new features. If the matrix is not maintained, it becomes a historical artifact that no longer matches reality, which can be worse than having no matrix because it creates false confidence. A working matrix has an owner and a review cadence, and it is updated whenever the environment changes in ways that affect control coverage. That update discipline should be tied to change management so that new systems, new integrations, and new vendors trigger a responsibility review. A Q S A will often detect stale matrices by comparing them to the current architecture and noticing missing components or outdated service names. For beginners, it helps to think of the matrix as a living map. If the city adds roads and you never update the map, you will get lost, and in security terms, getting lost means not knowing who is responsible for critical controls.
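
The comparison a Q S A makes between the matrix and the current architecture can also be run internally as part of change management. This minimal Python sketch assumes, hypothetically, that you can export the component names listed in the matrix and the component names in a current inventory; the names used here are invented. Set differences show both directions of drift: components nobody has been assigned, and matrix entries that no longer exist.

```python
def matrix_drift(matrix_components: set[str], current_inventory: set[str]) -> dict[str, set[str]]:
    """Compare components named in the matrix against the current architecture."""
    return {
        # In the environment but absent from the matrix: nobody is assigned.
        "unassigned": current_inventory - matrix_components,
        # In the matrix but no longer in the environment: stale entries.
        "stale": matrix_components - current_inventory,
    }


matrix = {"payment-api", "legacy-log-relay", "hsm-cluster"}
inventory = {"payment-api", "hsm-cluster", "tokenization-service"}
drift = matrix_drift(matrix, inventory)
print(sorted(drift["unassigned"]))  # prints ['tokenization-service']
print(sorted(drift["stale"]))       # prints ['legacy-log-relay']
```

Triggering this comparison whenever a new system, integration, or vendor is approved is one way to tie the matrix review to change management.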

It is also important that the matrix is written for the people who do the work, not only for auditors. Many matrices fail because they are filled with compliance language that sounds correct but does not guide daily decisions. A strong matrix uses clear, operational phrasing that a system owner or support technician can apply. It should answer common practical questions, such as who opens a ticket when a vulnerability is discovered, who approves the remediation plan, who validates completion, and who retains proof. It should also specify escalation paths for disagreements, because shared responsibility often creates conflicts, such as when a vendor says a control is not in their scope while the customer believes it is. Without an escalation path, those conflicts linger and the control remains unowned. A Q S A will view that as a risk because unresolved responsibility disputes tend to become long-term gaps. For a beginner, the lesson is that clear responsibility is a form of risk reduction because it prevents delays and prevents silence when something must be done.

As we wrap up, remember that building a shared responsibility matrix that works is about creating operational truth, not creating a pretty document. It starts with clear scope and a shared understanding of where payment data flows and where the C D E begins and ends. It assigns responsibility in a consistent structure that distinguishes doing, approving, and verifying, and it breaks down gray zones into specific sub-tasks that match reality. It anchors assignments to evidence so routine work produces routine proof, and it anticipates real-world pressure by defining how responsibilities work during incidents and emergency changes. It stays alive through ownership and regular updates as the environment evolves, and it uses plain language so the people responsible can actually follow it. For a Q S A, a good matrix reduces uncertainty and makes validation smoother because it turns a complex multi-party environment into a set of traceable, testable responsibilities. When you can point to a control, point to an owner, and point to consistent evidence, you have replaced assumptions with guardrails, and that is exactly what prevents shared responsibility from becoming shared confusion.