Episode 43 — Implement File Integrity Monitoring That Catches the Drift.

In this episode, we’re going to take something that can sound mysterious at first and make it feel practical and grounded: File Integrity Monitoring (FIM) in a payment environment. If you are new to cybersecurity, the phrase can sound like it belongs only to advanced teams, but the core idea is actually simple. Important files and settings should not change without a clear reason, and when they do change, the organization should know quickly and be able to explain the change. In a PCI context, this matters because systems in the Cardholder Data Environment (CDE) are supposed to behave in stable, predictable ways. Attackers love instability because it creates cover, and operators fear instability because it causes outages, incidents, and long troubleshooting loops. File integrity monitoring sits in the middle as a kind of early warning system that says something moved, something shifted, or something is no longer what we believed it was yesterday. The goal is not to drown the team in alerts about harmless changes, but to catch meaningful drift, meaning the slow and often unnoticed divergence between the intended secure state and the messy reality of day-to-day operations.

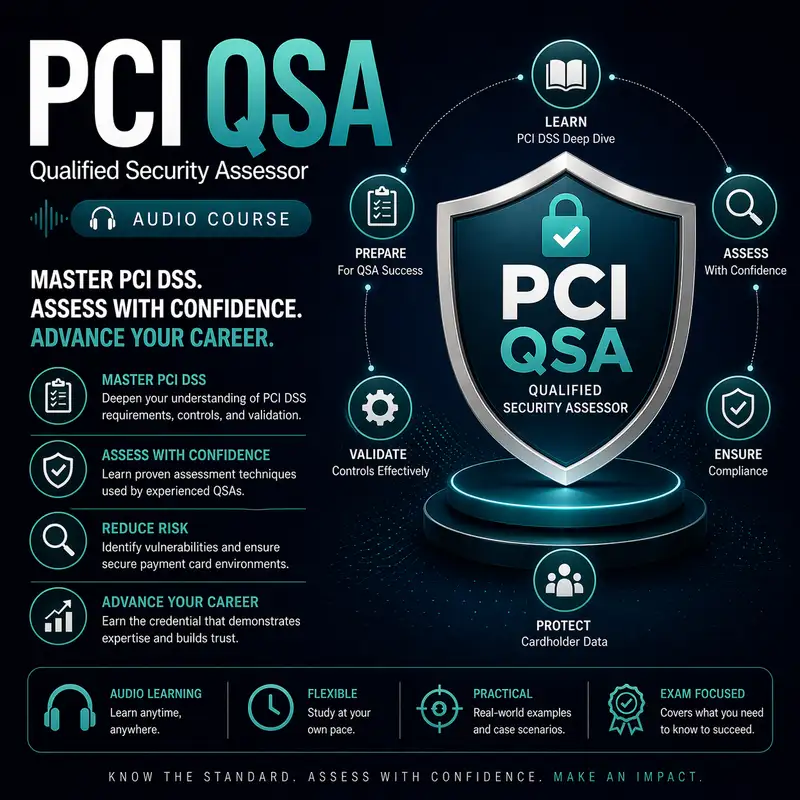

To understand why file integrity monitoring matters, it helps to understand the difference between a system being up and a system being trustworthy. A server can be running, responding to requests, and producing the expected screens or transactions, while still being quietly altered underneath. Those alterations can be accidental, like a well-meaning admin changing a configuration and forgetting about it, or they can be malicious, like an attacker inserting code that skims data or opens a hidden access path. In payment environments, the risk is especially sharp because small changes can have outsized consequences. A single modified script on a payment page can turn legitimate traffic into data theft. A changed configuration on a system that processes transactions can weaken encryption, disable logging, or loosen access controls. File integrity monitoring is the control that helps you notice these changes early, before they become normalized and forgotten. As a QSA, you are not only asking whether a tool is installed; you are asking whether the organization has a reliable way to detect, evaluate, and respond to unexpected changes in places that matter most.

When people first hear file integrity monitoring, they often assume it means monitoring every file on every system all the time, which would be impractical and would produce enormous noise. A smarter way to think about it is to monitor the right files for the right reasons. The right files are the ones that, if changed, could alter security posture, alter transaction behavior, or provide a foothold for an attacker. That includes operating system configuration files, application binaries, scripts, security agent configurations, and any files that define how the system authenticates users, handles network connections, or logs activity. It also includes content that a payment application serves to users if that content can be modified to include malicious code. The right reasons are tied to risk, and risk is tied to how likely the change is to happen legitimately and how damaging it would be if it happened illegitimately. File integrity monitoring becomes valuable when it is focused, purposeful, and connected to a process that helps people decide whether a change is expected or suspicious.

A core concept you need before you can validate file integrity monitoring is baselining. A baseline is a snapshot of what normal looks like for the files and settings you care about. Without a baseline, you cannot reliably say something changed. But baselines are not static forever, because environments evolve, patches update files, and releases introduce new versions. So baselining is less like taking one photo and more like maintaining an album that reflects the current approved state. Beginners sometimes misunderstand baselining and think it means locking a system into an unchanging state, which is not realistic. The real goal is to ensure changes that should happen are documented and approved, and changes that should not happen are detected quickly. File integrity monitoring supports that goal by constantly comparing the current state to the known approved state. When the two diverge, you get a signal. The quality of that signal depends heavily on how well the baseline is maintained and how well the monitoring is tuned to focus on meaningful targets.
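To make the baseline comparison concrete, here is a minimal sketch in Python. It assumes a baseline is simply a mapping from file path to SHA-256 digest; the function names `hash_file`, `take_snapshot`, and `compare_to_baseline` are hypothetical and chosen for illustration, not taken from any particular monitoring product.

```python
import hashlib
from pathlib import Path


def hash_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def take_snapshot(paths):
    """Map each monitored path that currently exists to its digest."""
    return {str(p): hash_file(Path(p)) for p in paths if Path(p).is_file()}


def compare_to_baseline(baseline, current):
    """Report files modified, added, or removed relative to the baseline."""
    return {
        "modified": sorted(
            p for p in baseline if p in current and baseline[p] != current[p]
        ),
        "added": sorted(p for p in current if p not in baseline),
        "removed": sorted(p for p in baseline if p not in current),
    }
```

In this sketch, the "album" idea from above corresponds to replacing the stored baseline with a fresh snapshot only after an approved change has been verified, so that the next comparison runs against the current approved state rather than an outdated one.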

Drift is the enemy that file integrity monitoring is meant to catch, and drift is usually not dramatic. Drift is slow. Drift is a configuration change made in a hurry and never reversed. Drift is a script patched manually on one server but not on the others. Drift is a permissions change that makes a file writable by more people than intended. Drift is a scheduled task added for troubleshooting that keeps running long after the incident is over. In payment environments, drift creates inconsistent security posture, which makes the environment harder to manage and easier to exploit. Attackers benefit when the environment is inconsistent because they can target the weakest instance of a system type, or hide in differences that defenders assume are normal. Operators suffer because inconsistencies make incidents harder to reproduce and fixes harder to roll out. File integrity monitoring helps by creating a trail of changes and by highlighting changes that happen outside the expected rhythm of patching and releases. The important lesson for new learners is that drift is not just a technical nuisance; it is a security problem and a validation problem.
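Two of those drift patterns, a file patched on one server but not the others and a file that has become writable by more people than intended, can be sketched in a few lines of Python. This is an illustrative sketch only: the helper names are hypothetical, and treating the most common digest as the reference is an assumption made for brevity. A real deployment would compare each host against the approved baseline instead, because the majority can itself be wrong.

```python
import stat
from collections import Counter


def find_outlier_hosts(digests_by_host):
    """Return hosts whose digest for a file differs from the most common one.

    Assumption: the most common digest stands in for the approved version.
    """
    if not digests_by_host:
        return []
    counts = Counter(digests_by_host.values())
    majority_digest, _ = counts.most_common(1)[0]
    return sorted(
        host for host, digest in digests_by_host.items() if digest != majority_digest
    )


def is_writable_beyond_owner(mode: int) -> bool:
    """True if the permission bits allow group or world write access."""
    return bool(mode & (stat.S_IWGRP | stat.S_IWOTH))
```

For example, passing `os.stat(path).st_mode` to `is_writable_beyond_owner` flags a configuration file whose mode has loosened from `0o644` to `0o666`.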

To make file integrity monitoring work in practice, organizations need to define what counts as a critical file and what counts as an expected change. Critical files are often those that define access control, logging behavior, network posture, and application logic. Expected changes are changes that occur through approved processes, like a scheduled patch window or a planned release. Alerts matter most where those two definitions fail to line up, that is, where a critical file changes without an expected reason. If a critical file changes during a planned release, the organization should still be able to explain it, but it may not require the same urgency as a critical file changing at an unusual time. If a critical file changes outside planned processes, that is a stronger sign something is wrong. This is where the phrase “catches the drift” becomes more than a slogan. Catching drift means catching changes that slip outside the discipline of the change pipeline. A QSA will look for evidence that alerts are not only generated but also investigated, triaged, and resolved in a documented way. If alerts exist but no one reviews them, the monitoring is decorative, not protective.
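The distinction between expected and unexpected changes can be expressed as a small triage rule. The sketch below is one possible shape, assuming each approved change is recorded with a ticket identifier, a time window, and the set of paths it is allowed to touch. The `ChangeWindow` class, the `triage` function, and the three labels are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ChangeWindow:
    """An approved change: ticket, time window, and paths it may touch."""
    ticket_id: str
    start: datetime
    end: datetime
    approved_paths: frozenset


def triage(path, changed_at, is_critical, windows):
    """Classify a detected change as expected, urgent, or review.

    Returns a (label, ticket_id) pair; ticket_id is None when no
    approved window covers the change.
    """
    for window in windows:
        in_window = window.start <= changed_at <= window.end
        if in_window and path in window.approved_paths:
            # Still recorded and explainable, but not an emergency.
            return ("expected", window.ticket_id)
    if is_critical:
        # Critical file changed outside the change pipeline.
        return ("urgent", None)
    return ("review", None)
```

Note that a change made during an approved window to a path that the ticket does not list still falls through to the urgent or review outcome, which reflects the point that the timing of a change alone does not make it approved.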

Another concept that matters is integrity versus availability. File integrity monitoring is not primarily about keeping systems running; it is about knowing whether the systems remain trustworthy. A system can be fully available and still be compromised. That is why integrity is a separate security property from availability, and why integrity monitoring needs its own attention. For beginners, it helps to think of integrity as the question: can we trust what this system is doing? If an attacker changes a small component of a payment process, the system can keep functioning and customers might never notice. But the integrity is broken because the system is doing something extra behind the scenes. File integrity monitoring creates a chance to catch that extra behavior early by noticing the change in the file that enabled it. In a PCI assessment, integrity controls are closely tied to the idea that payment environments should be stable and well-controlled. The more stable the environment, the easier it is to notice unusual change. The less stable the environment, the more noise you have, and noise is what attackers hide behind.

It is also important to understand that file integrity monitoring is only as strong as the protection of the monitoring itself. If an attacker can disable the monitoring, edit its configuration, or tamper with its records, then the organization might have a false sense of safety. A mature approach includes making the monitoring data hard to alter and making changes to monitoring configurations visible. This again connects to drift. Monitoring configurations can drift too, especially if someone turns off a noisy alert rather than fixing the underlying issue. From a validation standpoint, you want to see that the organization treats the monitoring system as part of the security boundary, not as an optional accessory. That means it is included in change control, it is included in access control, and it is included in logging and review. For a beginner, you do not need to memorize a specific architecture; you just need to understand the principle that a security control must be protected from the same threats it is meant to detect.
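One way to picture "making the monitoring data hard to alter" is to sign the stored baseline so that tampering becomes detectable. The sketch below uses an HMAC over a canonical JSON encoding of the baseline. It assumes the signing key is kept somewhere the monitored host cannot read or change, which is the part that actually provides the protection; the function names are hypothetical and this is not a description of how any specific product works.

```python
import hashlib
import hmac
import json


def sign_baseline(baseline: dict, key: bytes) -> str:
    """Return an HMAC-SHA256 signature over a canonical JSON encoding."""
    payload = json.dumps(
        baseline, sort_keys=True, separators=(",", ":")
    ).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def baseline_is_intact(baseline: dict, signature: str, key: bytes) -> bool:
    """Check the stored baseline against its signature in constant time."""
    expected = sign_baseline(baseline, key)
    return hmac.compare_digest(expected, signature)
```

If someone edits a digest in the stored baseline to hide a modified file, the signature no longer matches and the check fails, which turns tampering with the monitoring records into a detectable event of its own.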

A common failure pattern is alert fatigue, where the monitoring is configured too broadly, generating constant notifications about harmless changes until people stop paying attention. Alert fatigue is a human problem caused by a technical misalignment. If everything is an emergency, nothing is an emergency. The right response is not to abandon monitoring, but to refine what is monitored and how alerts are categorized. That refinement starts with understanding the environment’s normal change rhythm and aligning monitoring to it. For example, if patching happens weekly, changes to certain system files during that window may be expected, but changes outside that window should stand out. If releases happen daily for a front-end system, then integrity monitoring may focus more on detecting unapproved modifications, unexpected file permission changes, or changes occurring outside the release pipeline. The key is not the frequency of change, but whether the change is controlled. A QSA will ask how the organization prevents alert fatigue and how it ensures alerts lead to action. When a team can show examples of alerts, investigations, and outcomes, it demonstrates that monitoring is alive.

File integrity monitoring also strengthens incident response, because it provides timelines and clues about what happened and when. If a suspicious change is detected, the organization can correlate it with other signals like authentication logs, remote access activity, and network anomalies. For new learners, this is where system time and logging discipline become linked to integrity monitoring. A file change alert that includes a time stamp is only useful if time stamps across systems can be trusted. An alert that identifies a changed file is only useful if the organization can tell who had access to that file and whether the change aligns with an approved change ticket. This is why controls in PCI are not isolated; they reinforce each other. File integrity monitoring is most effective when it sits inside a broader environment of good change control, strong access control, and reliable logging. When those supporting controls are weak, file integrity monitoring becomes harder to interpret, and attackers gain more room to hide. Validation means you look for the ecosystem around the monitoring, not just the monitoring itself.

When you think like a QSA validating file integrity monitoring, you are essentially validating three things: selection, detection, and response. Selection means the organization has identified the systems and files that matter and has a rationale for why they are monitored. Detection means the monitoring is actually working, meaning changes generate signals that can be reviewed and trusted. Response means there is a human process that takes those signals and turns them into action, whether that action is confirming an expected change, correcting drift, or investigating a possible compromise. For beginners, it can help to imagine a fire alarm. The alarm is valuable only if it is installed in the right places, if it reliably detects smoke, and if people know what to do when it sounds. A fire alarm that triggers constantly during cooking will be ignored, and a fire alarm that never triggers is useless. File integrity monitoring is similar. It should be tuned to detect real risk, and it should lead to consistent action. Evidence of action is often the difference between a compliant-looking environment and a truly controlled one.

As we wrap up, remember that file integrity monitoring is fundamentally about trust and drift. Payment environments need to remain in a known, approved state, and reality constantly tries to pull them away from that state through patches, fixes, workarounds, and sometimes attacks. File integrity monitoring is one of the controls that pulls the environment back toward truth by making change visible. It is not there to shame people for changes; it is there to prevent silent changes from becoming permanent weak points. When implemented thoughtfully, it reduces chaos because it creates a reliable signal for when the environment is drifting away from its intended design. When validated well, it gives a QSA confidence that the organization can detect and explain changes that matter, and that it will notice when something unusual happens. If you keep the mental model of baselines, focused monitoring, and disciplined response, you will be able to understand what good file integrity monitoring looks like without getting lost in tool details, and you will be able to spot the weak patterns that allow drift to turn into real payment risk.