Episode 40 — Align Testing Frequencies and Triggers to Reality.

In this episode, we’re going to make testing frequency feel like a practical, defensible rhythm rather than an arbitrary calendar habit that everyone follows because it has always been done that way. Security testing exists to confirm that controls are working and to catch drift before it becomes a breach, but testing is only effective when it happens often enough to match how quickly your environment changes and how quickly attackers can exploit new weaknesses. Beginners sometimes assume the right answer is to test everything constantly, yet that approach can overwhelm teams and produce more noise than insight. Other teams do the opposite and test rarely, which creates long blind periods where serious problems can grow unnoticed. Aligning frequencies and triggers to reality means you pick a cadence for each type of testing based on risk, exposure, and change, and you also define event-based triggers that force testing when something meaningful changes. The goal is to avoid both complacency and chaos by building a testing program that fits the organization’s actual operations. When this alignment is done well, testing becomes part of routine operations and the organization develops confidence based on evidence rather than hope.

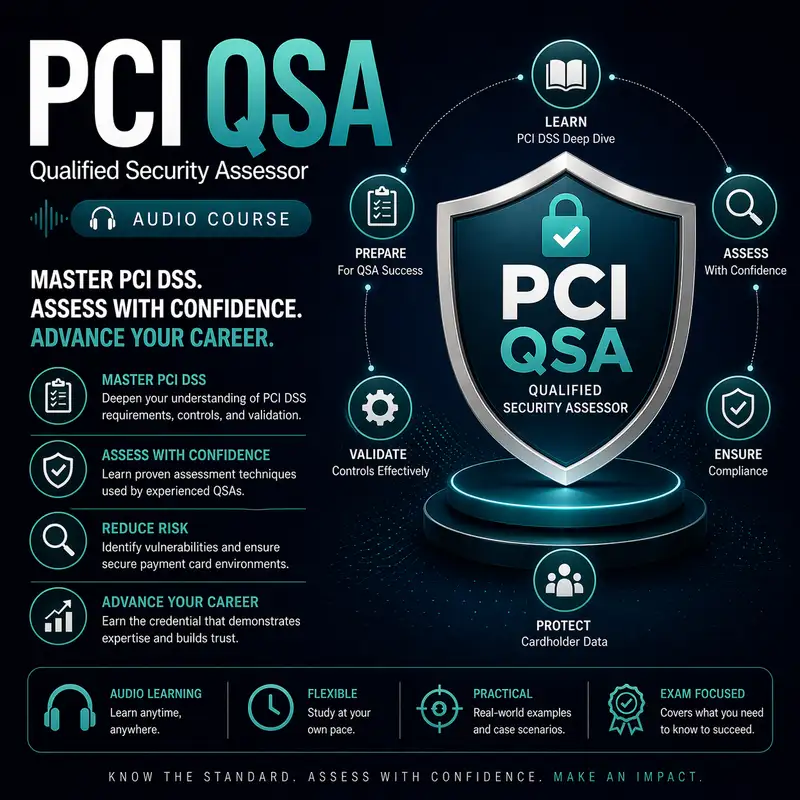

Before we continue, a quick note: this audio course has two companion books. The first book covers the exam and explains in detail how best to pass it. The second is a Kindle-only eBook containing 1,000 flashcards that you can use on your mobile device or Kindle. Check them both out at Cyber Author dot me, in the Bare Metal Study Guides Series.

A strong way to start is to understand why fixed calendars are not enough on their own, even though they can be useful. Calendars create predictability, which helps teams plan time, coordinate resources, and maintain consistency. However, real environments do not change on a predictable schedule, and attackers do not wait for your next quarterly test. A new vulnerability may become widely exploited within days, and an emergency change may introduce exposure overnight. If you rely only on a calendar, you can miss the moments when risk spikes and testing is most needed. Beginners often think of testing as a scheduled event, but mature programs treat testing as both scheduled and reactive, with triggers that respond to change. A testing program aligned to reality uses calendars for routine assurance and triggers for risk spikes. This dual approach makes testing more efficient because it focuses attention when it matters most. Over time, that efficiency reduces fatigue while improving security.

To align testing properly, you need to understand the main categories of security testing that organizations rely on, because each category answers a different question. Vulnerability scanning asks what known weaknesses exist in systems and exposures. Penetration testing asks whether realistic attack paths can lead to compromise, often by chaining issues together. Configuration validation asks whether systems are built and operated according to secure baselines. Segmentation testing asks whether network boundaries actually block and allow traffic as intended. Monitoring validation asks whether logs and alerts are functioning so incidents can be detected. Secure software lifecycle testing asks whether application changes are being evaluated for security impact before release. Beginners sometimes treat all testing as interchangeable, but each test type has its own optimal frequency and triggers. Aligning to reality means you do not choose a single rhythm for everything; you choose rhythms based on how quickly each risk surface changes. When you map testing types to risk surfaces, frequency decisions become logical rather than political.

Asset criticality is one of the strongest anchors for deciding frequency, because the most important systems deserve the most consistent attention. In payment environments, systems in the cardholder data environment and systems that control them, like identity services, key management systems, and administrative consoles, generally require more frequent validation. High-value internet-facing systems also deserve frequent attention because exposure is constant and attackers can reach them easily. Lower-value internal systems may still need testing, but the frequency can be lower if they are well segmented and have limited paths into sensitive areas. Beginners sometimes assume fairness means equal testing, but risk-based management means you allocate effort where it reduces the most danger. Frequency decisions should reflect the potential impact of failure, because a missed weakness on a critical system is more costly than a missed weakness on a low-impact system. When teams accept this principle, frequency alignment becomes a shared strategy instead of a recurring argument. That shared strategy is what makes the testing program sustainable.

Change rate is another major reality factor, because systems that change often should be tested more often. A web payment application that receives frequent updates has more opportunities to introduce new vulnerabilities, new data flows, or new logging gaps. A stable infrastructure component that rarely changes may still need periodic testing, but it may not require the same frequency as a rapidly evolving application. Beginners often underestimate how much change influences risk, because changes feel routine and harmless, but many security issues are introduced through normal changes, not through malicious intent. Aligning testing to reality means you track where change is happening and tie additional testing to those change zones. This includes changes to code, configuration, network rules, integrations, and third-party services. It also includes the introduction of new assets, such as new domains, new public endpoints, or new administrative access paths. When testing frequency follows change, testing becomes a protective wrapper around evolution rather than a lagging response after problems appear.
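To make the reasoning concrete, here is a minimal sketch of how criticality, exposure, and change rate could combine into a routine testing interval. The `Asset` fields, the 90-day starting point, the halving rule, and the 7-day floor are all illustrative assumptions rather than values from any standard; a real program would set them through its own risk analysis.

```python
from dataclasses import dataclass

# Illustrative starting interval in days; an assumption, not a required value.
BASE_INTERVAL_DAYS = 90
# Floor so the cadence never drops below weekly in this sketch (also an assumption).
MIN_INTERVAL_DAYS = 7


@dataclass
class Asset:
    name: str
    critical: bool          # handles or controls sensitive payment functions
    internet_facing: bool   # reachable from outside the organization
    changes_per_month: int  # rough count of deployments or configuration changes


def suggested_interval_days(asset: Asset) -> int:
    """Return a hypothetical number of days between routine tests.

    Each risk factor that applies halves the interval, so assets that are
    critical, exposed, and fast-changing are tested most often.
    """
    interval = BASE_INTERVAL_DAYS
    if asset.critical:
        interval //= 2
    if asset.internet_facing:
        interval //= 2
    if asset.changes_per_month >= 4:  # "changes often" threshold is an assumption
        interval //= 2
    return max(interval, MIN_INTERVAL_DAYS)


checkout = Asset("payment web app", critical=True, internet_facing=True, changes_per_month=8)
wiki = Asset("internal wiki", critical=False, internet_facing=False, changes_per_month=0)
print(suggested_interval_days(checkout), suggested_interval_days(wiki))
```

The point of the sketch is the shape of the decision, not the numbers: the interval shrinks as risk factors stack up, and it is derived from properties of the asset rather than from a single calendar applied to everything.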

Triggers are the key mechanism that ties testing to real events, and understanding triggers helps beginners see how a program stays responsive without testing everything all the time. A trigger is an event that requires a test or a validation activity because it indicates risk has increased or the environment has changed in a meaningful way. For example, a major change to a payment flow should trigger validation that data is still protected and that sensitive values are not logged or stored. A new internet-facing system should trigger external scanning and configuration validation before it is considered stable. A significant change to firewall rules or routing should trigger segmentation validation because boundaries may have shifted. A change to authentication or access control should trigger testing that ensures privileges and sessions behave as intended. A newly disclosed vulnerability in widely used software should trigger targeted scanning or patch verification on affected assets. Beginners sometimes think triggers create extra work, but triggers prevent the worst kind of work, which is emergency response after undetected drift.

Testing frequency should also be influenced by how quickly detection and response can operate, because strong monitoring can reduce the window of unknown risk. If monitoring is mature, alerts are actionable, and response is fast, the organization may be able to tolerate slightly longer intervals between certain tests because anomalies are more likely to be detected quickly. However, monitoring is not a substitute for preventative testing because detection does not prevent the weakness from existing. The best alignment treats monitoring and testing as complementary, with monitoring catching unexpected issues and testing verifying expected controls. Beginners sometimes assume that monitoring can replace testing, but monitoring tells you when something might be wrong, while testing tells you whether controls are still right. Frequency decisions should consider whether monitoring is proven to be effective and whether logs and alerts cover the high-risk areas reliably. If monitoring is weak, testing must compensate with more frequent validation because blind spots increase uncertainty. Aligning to reality means being honest about detection maturity rather than assuming it is strong.

Another reality factor is the difference between internal and external exposure, because externally reachable assets tend to require more frequent testing due to constant attacker pressure. External scanning is typically more frequent because it is designed to catch visible weaknesses that attackers can exploit quickly. Internal testing, such as internal scanning and segmentation checks, may be tied more closely to changes and to periodic schedules because an attacker typically needs an initial foothold before internal systems become reachable. However, internal systems can still be high risk if they provide administrative control, store sensitive data, or serve as bridges into the cardholder data environment. Beginners sometimes treat internal systems as low risk by default, but internal compromise is common through phishing and credential theft, so internal testing must still be regular. Aligning frequency means weighting external exposure heavily while also respecting internal high-value assets. It also means ensuring that claims about segmentation are tested, because proven segmentation is a major reason internal testing frequency can be reduced safely. When segmentation is proven and maintained, internal testing can be more focused; when it is not, internal testing must be more frequent and broader.

Third-party changes are another trigger area that often surprises teams because vendors can change systems in ways the organization does not see directly. A payment processor might update an integration method, a hosting provider might change underlying infrastructure behavior, or a web content service might modify how scripts load. These changes can affect data flow, security controls, and exposure, even if the organization did not deploy code itself. Aligning testing to reality means treating third-party changes as triggers for validation, especially when they affect payment flows or customer-facing pages. Beginners sometimes assume vendors will announce every impactful change clearly, but communication can be imperfect, and the business still bears the risk. This is why vendor management and change awareness are part of a realistic testing program. When vendor changes are tracked and tied to validation steps, surprises decrease and trust increases. Testing aligned to reality accounts for the fact that your environment includes systems you do not fully control.

Evidence and governance are what keep frequency and trigger decisions from becoming inconsistent, because people need to know what is expected and what proves it happened. A mature program documents the testing schedule and the trigger rules, and it defines ownership for initiating tests and reviewing results. It also tracks completion and remediation so tests do not become repeated measurements without improvement. Beginners sometimes think governance is separate from testing, but governance is what ensures testing actually occurs and results lead to action. Governance also helps resolve conflicts, such as when teams want to delay a test because of business pressure, by providing clear escalation and decision authority. When frequency and triggers are documented and owned, the program becomes resilient to turnover and shifting priorities. Evidence produced by testing also supports compliance and assurance, because it shows that controls are verified regularly and after meaningful changes. Aligning to reality includes aligning to accountability, because without accountability, even the best designed schedule will be ignored under pressure.
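As a rough illustration of what a documented, owned schedule makes possible, the sketch below records each test with an owner, an interval, and a last completion date, then lists whatever is overdue. The `ScheduledTest` fields, the owner names, the intervals, and the dates are all made up for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ScheduledTest:
    name: str
    owner: str            # who initiates the test and reviews results
    interval_days: int    # documented cadence for this test type
    last_completed: date  # evidence that the test actually happened

    def due_date(self) -> date:
        return self.last_completed + timedelta(days=self.interval_days)


def overdue(tests, today):
    """Return tests whose due date has passed, most overdue first."""
    late = [t for t in tests if t.due_date() < today]
    return sorted(late, key=lambda t: t.due_date())


schedule = [
    ScheduledTest("external scan", "network team", 30, date(2024, 4, 1)),
    ScheduledTest("segmentation validation", "security team", 180, date(2024, 1, 15)),
    ScheduledTest("penetration test", "security team", 365, date(2023, 3, 1)),
]

for test in overdue(schedule, today=date(2024, 6, 1)):
    print(f"{test.name} (owner: {test.owner}) was due {test.due_date()}")
```

A report like this gives escalation something concrete to work from: a named test, a named owner, and a date that was missed.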

A common beginner misconception is that more testing always means better security, but excessive testing can create noise and slow remediation by overwhelming teams with findings they cannot address quickly. Realistic alignment means choosing a cadence that produces a manageable flow of results that can be triaged, remediated, and verified. If tests produce more findings than the organization can handle, issues will pile up and risk will remain. A better approach is to focus high-frequency testing where it matters most and to ensure that remediation capacity matches test output. This also means tuning tests to reduce false positives and to focus on meaningful signals. Beginners sometimes treat testing output as a scoreboard, but the true measure is whether testing drives risk reduction. If testing is aligned well, the organization steadily improves, and the number of high-risk findings decreases over time. That is what a sustainable program looks like, and sustainability is part of reality.
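A little arithmetic shows why remediation capacity has to match test output. The sketch below assumes a deliberately simple model: each cycle adds a fixed number of new findings and the team closes a fixed number, with the function name and all figures chosen purely for illustration.

```python
def backlog_over_time(findings_per_cycle, fixes_per_cycle, cycles, starting_backlog=0):
    """Return the open-finding backlog after each testing cycle.

    Simplified model (an assumption of this sketch): constant new findings
    and constant remediation capacity per cycle; the backlog cannot go
    below zero.
    """
    backlog = starting_backlog
    history = []
    for _ in range(cycles):
        backlog = max(0, backlog + findings_per_cycle - fixes_per_cycle)
        history.append(backlog)
    return history


# Testing produces more than the team can fix: the backlog grows every cycle.
print(backlog_over_time(findings_per_cycle=50, fixes_per_cycle=30, cycles=4))

# Tuned testing within capacity: an existing backlog shrinks instead.
print(backlog_over_time(findings_per_cycle=20, fixes_per_cycle=30, cycles=3, starting_backlog=40))
```

In the first case the backlog rises by the same amount each cycle no matter how diligent the team is, which is the pile-up described above. In the second, fewer and better-targeted findings let the team work the backlog down.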

As you bring all of this together, aligning testing frequencies and triggers to reality is about matching your assurance efforts to the pace of change and the shape of risk in your environment. You define the major testing categories and understand that each one answers a different question, so each one deserves its own rhythm. You anchor frequency decisions in asset criticality and exposure, giving the most attention to systems that handle or control sensitive payment functions. You tie additional testing to change rate, recognizing that fast-changing systems need more frequent validation because drift and new weaknesses appear there first. You define triggers for meaningful events, such as payment flow changes, new internet-facing assets, segmentation rule changes, authentication changes, and newly disclosed vulnerabilities, so testing responds to real risk spikes. You consider monitoring maturity, third-party change realities, and remediation capacity so the program remains both effective and sustainable. You document the schedule and trigger rules with clear ownership so testing happens consistently and produces defensible evidence. When testing is aligned to reality in this way, it stops being a ritual and becomes a reliable engine for continuous security assurance.