Episode 35 — Monitor Effectively With SIEM, Alerts, and Triage.

In this episode, we’re going to make monitoring feel like a calm, repeatable discipline instead of a frantic search for needles in an endless haystack. A lot of organizations collect logs, buy monitoring tools, and still feel blind when something goes wrong, because effective monitoring is not primarily about owning technology; it is about building a process that turns signals into decisions. In payment environments, monitoring matters because attackers often spend time inside systems before anyone notices, and every hour of delay can increase the impact of a breach. The phrase “monitor effectively with a S I E M, alerts, and triage” points to three connected ideas that must work together. Security Information and Event Management (S I E M) is the place where signals are collected and correlated, alerts are the rules and outputs that call attention to potential risk, and triage is the human process of deciding what to do next. Beginners often focus on the first part, the tool, and assume the rest will fall into place, but the tool only amplifies whatever process exists. When process is disciplined, the S I E M becomes powerful; when process is weak, the S I E M becomes loud noise.

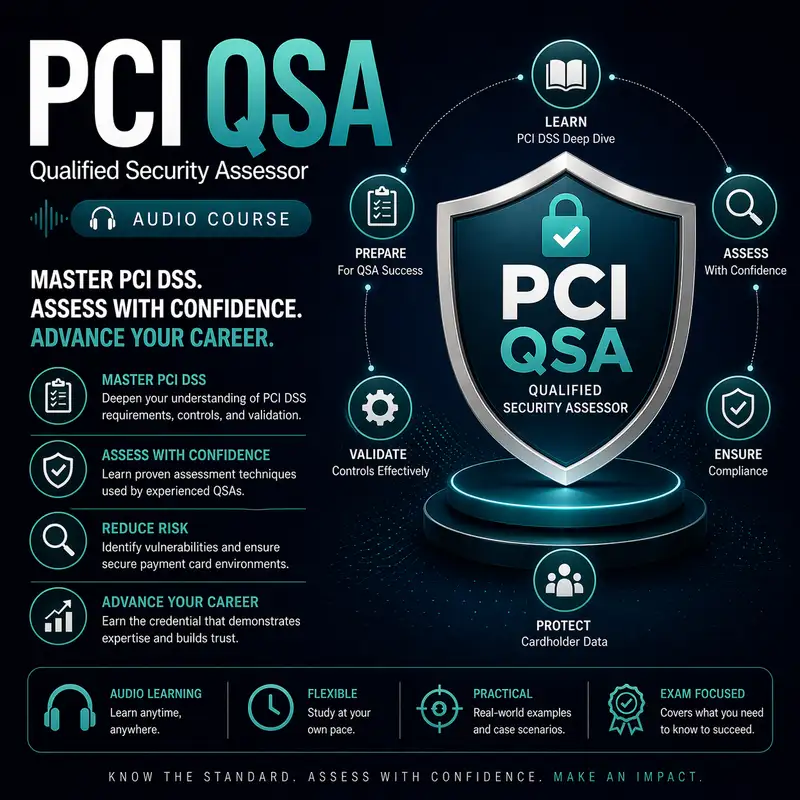

Before we continue, a quick note: this audio course is a companion to our two course books. The first book covers the exam and explains in detail how best to pass it. The second book is a Kindle-only eBook containing 1,000 flashcards that you can use on your mobile device or Kindle. Check them both out at Cyber Author dot me, in the Bare Metal Study Guides Series.

To begin, it helps to define what a S I E M actually does in practical terms. A S I E M ingests events from many sources, such as servers, network devices, applications, authentication systems, and security controls, then stores and organizes those events so they can be searched, correlated, and used for alerting. The value of centralization is that you can see patterns that are invisible when logs are scattered across separate systems. For example, a suspicious login might look harmless on its own, but when correlated with a new administrative action and unusual outbound traffic, it becomes a meaningful story. Beginners sometimes assume correlation is automatic magic, but correlation only works when logs are consistent, time is synchronized, and key fields like user identity are captured reliably. The S I E M is also valuable for investigations because it can preserve a history of events and make it possible to reconstruct timelines. In payment environments, that history matters because you may need to understand exactly how an incident unfolded and whether cardholder data was accessed. When you treat the S I E M as a system for building narratives from events, its purpose becomes clearer and its limitations become easier to manage.
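To make the correlation idea concrete, here is a minimal Python sketch, not any product's actual rule engine. It assumes events are already normalized into dictionaries with `user`, `type`, and `time` fields, and the three event type names are hypothetical labels chosen to mirror the example above.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical event type names; real S I E M schemas differ by product.
STORY = {"suspicious_login", "admin_action", "unusual_outbound"}


def correlate(events, window=timedelta(minutes=30)):
    """Return users whose events cover every STORY type within one time window."""
    by_user = defaultdict(list)
    for event in events:
        if event["type"] in STORY:
            by_user[event["user"]].append(event)

    flagged = []
    for user, user_events in by_user.items():
        user_events.sort(key=lambda e: e["time"])
        for i, first in enumerate(user_events):
            # Event types seen from this event onward, inside the window.
            seen = {
                e["type"]
                for e in user_events[i:]
                if e["time"] - first["time"] <= window
            }
            if seen == STORY:
                flagged.append(user)
                break
    return sorted(flagged)


t0 = datetime(2024, 5, 1, 9, 0)
events = [
    {"user": "alice", "type": "suspicious_login", "time": t0},
    {"user": "alice", "type": "admin_action", "time": t0 + timedelta(minutes=5)},
    {"user": "alice", "type": "unusual_outbound", "time": t0 + timedelta(minutes=20)},
    {"user": "bob", "type": "suspicious_login", "time": t0},
]
print(correlate(events))  # ['alice']
```

Notice that the sketch only works because every event carries the same user field and comparable timestamps, which is exactly the consistency that correlation depends on.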

Effective monitoring begins long before alerts, because if the wrong data is collected or data is collected inconsistently, you cannot build trustworthy conclusions. Sources must be chosen deliberately, focusing first on systems that store, process, or transmit cardholder data and the systems that control them. You also want events from identity systems, privileged access paths, key management systems, and network boundaries, because attackers often target those for leverage. Beginners sometimes think every log source is equally valuable, but some sources provide strong security signal and others produce mostly operational noise. You want to prioritize authentication events, privilege changes, access to sensitive data, configuration changes, and security tool status, because these are the events that most often indicate intrusion or control failure. You also want to collect events that show whether monitoring itself is healthy, like whether log forwarding is failing or agents are offline, because a silent gap in logging can hide the most important activity. When collection is designed well, alerts and triage become much easier, because you are working with a coherent picture instead of scattered fragments.
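Checking whether monitoring itself is healthy can start very simply. The sketch below assumes you can obtain, for each log source, the timestamp of the most recent event received; the source names and the fifteen-minute gap are illustrative assumptions, not recommended values.

```python
from datetime import datetime, timedelta, timezone


def silent_sources(last_seen, now, max_gap=timedelta(minutes=15)):
    """Return names of sources whose most recent event is older than max_gap."""
    return sorted(name for name, seen in last_seen.items() if now - seen > max_gap)


now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
last_seen = {
    "auth-server": now - timedelta(minutes=2),
    "payment-app": now - timedelta(hours=3),  # forwarding has probably stalled
    "edge-firewall": now - timedelta(minutes=14),
}
print(silent_sources(last_seen, now))  # ['payment-app']
```

In practice the acceptable gap would likely differ per source, since a busy authentication system and a quiet key management system have very different normal rhythms.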

Time and identity are two details that sound mundane but decide whether monitoring works at all. If timestamps are inconsistent across systems, a timeline becomes confusing and correlation becomes unreliable. If user identities are recorded inconsistently, it becomes hard to tell whether two events involve the same actor. Effective monitoring requires synchronized time and clear identity mapping so you can confidently tie actions to accounts and systems. Beginners often assume time is just time, but in distributed environments, devices can drift, and even small drift can scramble event ordering during fast-moving incidents. Identity can be tricky too, because the same person might appear as different usernames across systems, or systems might log only partial information. A disciplined monitoring program addresses these issues early by establishing time synchronization and by ensuring logs contain usable identity fields. This is part of why monitoring is a program, not a product, because it requires consistent standards across many teams and systems. When time and identity are stable, alert rules become more accurate and investigations become far less frustrating.
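As a rough illustration of normalizing time and identity at ingestion, the Python sketch below converts timestamps to U T C and maps usernames to one canonical form. The alias table and field names are hypothetical, and the code does not correct clock drift, which has to be solved with time synchronization on the devices themselves.

```python
from datetime import datetime, timezone

# Hypothetical alias table mapping per-system usernames to one canonical identity.
ALIASES = {
    "jsmith": "john.smith",
    "corp\\jsmith": "john.smith",
    "john.smith@example.com": "john.smith",
}


def normalize(event):
    """Return a copy of the event with a UTC timestamp and a canonical user."""
    stamp = datetime.fromisoformat(event["time"])
    if stamp.tzinfo is None:
        # Guessing a zone would silently scramble ordering, so refuse instead.
        raise ValueError("timestamp has no UTC offset: " + event["time"])
    raw_user = event["user"].strip().lower()
    return {
        **event,
        "time": stamp.astimezone(timezone.utc),
        "user": ALIASES.get(raw_user, raw_user),
    }


a = normalize({"time": "2024-05-01T12:00:00+02:00", "user": "CORP\\JSmith"})
b = normalize({"time": "2024-05-01T10:00:00+00:00", "user": "john.smith@example.com"})
print(a["time"] == b["time"], a["user"] == b["user"])  # True True
```

Rejecting timestamps that carry no offset is a deliberate choice in this sketch: a visible error is easier to fix than a timeline that is quietly wrong.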

Alerts are where many monitoring programs fail, not because alerting is unimportant, but because poor alert design creates either silence or constant noise. An alert is essentially a decision rule that says that when certain patterns or thresholds occur, someone should pay attention and possibly take action. Beginners often assume more alerts means better security, but too many alerts lead to fatigue, and fatigue leads to ignored alerts. Effective alerting starts with a clear definition of high-value signals, such as unusual privileged activity, repeated authentication failures, changes to security settings, and access to sensitive systems from unexpected sources. These alerts should be tied to real risk and designed to be actionable, meaning the person receiving the alert can quickly decide what to do next. Alerts should also include context, like the affected user, system, time, and relevant surrounding events, because context reduces the time to triage. When alerts are designed around risk and action, they become a reliable early warning system rather than a constant distraction.
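One of the signals named above, repeated authentication failures, can be sketched as a threshold rule that also attaches context. This is a simplified Python illustration: the field names, the threshold of five, and the ten-minute window are assumptions for the example, not recommended settings.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def failed_login_alerts(events, threshold=5, window=timedelta(minutes=10)):
    """Emit one alert per user each time `threshold` failures fall inside `window`."""
    recent = defaultdict(deque)
    alerts = []
    for event in sorted(events, key=lambda e: e["time"]):
        if event["type"] != "auth_failure":
            continue
        queue = recent[event["user"]]
        queue.append(event)
        # Drop failures that have aged out of the window.
        while event["time"] - queue[0]["time"] > window:
            queue.popleft()
        if len(queue) >= threshold:
            alerts.append({
                "rule": "repeated_auth_failure",
                "user": event["user"],
                "count": len(queue),
                "first_seen": queue[0]["time"],
                "last_seen": event["time"],
                "sources": sorted({e["source"] for e in queue}),
            })
            queue.clear()  # require a fresh run of failures before alerting again


    return alerts


t0 = datetime(2024, 5, 1, 9, 0)
events = [
    {"user": "alice", "type": "auth_failure", "source": "10.0.0.5" if i % 2 else "10.0.0.9",
     "time": t0 + timedelta(minutes=i)}
    for i in range(5)
] + [
    {"user": "bob", "type": "auth_failure", "source": "10.0.0.7", "time": t0},
    {"user": "bob", "type": "auth_failure", "source": "10.0.0.7", "time": t0 + timedelta(minutes=1)},
]
alerts = failed_login_alerts(events)
print(len(alerts), alerts[0]["user"], alerts[0]["sources"])
```

The alert carries the user, the count, the time span, and the source addresses, so whoever receives it does not have to run a search just to learn what happened.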

Triage is the bridge between an alert and an incident response, and it is where monitoring becomes real human work. Triage means assessing whether an alert indicates a true security issue, how severe it might be, and what immediate steps should be taken. The purpose of triage is not to prove the full story instantly, but to make a safe decision quickly, such as whether to contain, escalate, or close as benign. Beginners sometimes imagine triage as a deep forensic investigation, but in practice triage is often about rapid judgment based on limited evidence. A well-run triage process uses playbooks that define common alert types, typical causes, and the first actions to gather context. It also defines what counts as urgent and what can be investigated on a slower timeline. Without triage discipline, teams either overreact to every alert, causing unnecessary disruption, or underreact, allowing attackers time to expand. Effective monitoring requires triage to be predictable, calm, and repeatable, because that is what allows teams to respond consistently under pressure.

To make triage practical, you need to think about severity and prioritization in a way that matches real risk rather than dramatic language. Severity often depends on the asset involved, the privilege level of the account, and the plausibility that the activity is malicious. An alert on a test system with no sensitive data is different from an alert on an administrative account in the cardholder data environment. An alert indicating a failed login attempt is different from an alert indicating a successful login followed by a privilege change. Beginners sometimes interpret any security alert as equally urgent, which can create burnout and poor decision-making. A disciplined monitoring program defines what high risk looks like and uses that definition to prioritize attention. It also sets expectations for response times, such as immediate action for high-risk privileged anomalies and scheduled review for low-risk noise. When prioritization is clear, triage becomes easier because decisions are guided by agreed standards rather than by emotion.
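A prioritization standard like this can be written down as a small scoring table so that decisions do not depend on who is on shift. The Python sketch below is purely illustrative: the categories, weights, thresholds, and response targets are invented for the example and would need to be agreed on by each organization.

```python
# Hypothetical weights; a real program would agree on its own scale.
ASSET_WEIGHT = {"test": 0, "internal": 1, "cde": 3}
PRIVILEGE_WEIGHT = {"standard": 0, "admin": 2}
SIGNAL_WEIGHT = {"failed_login": 0, "login_then_privilege_change": 3}


def prioritize(asset, privilege, signal):
    """Return (priority, response target) from asset, privilege, and signal type."""
    score = ASSET_WEIGHT[asset] + PRIVILEGE_WEIGHT[privilege] + SIGNAL_WEIGHT[signal]
    if score >= 6:
        return "high", "immediate"
    if score >= 3:
        return "medium", "same day"
    return "low", "scheduled review"


print(prioritize("cde", "admin", "login_then_privilege_change"))  # ('high', 'immediate')
print(prioritize("test", "standard", "failed_login"))  # ('low', 'scheduled review')
```

The value of a table like this is less in the exact numbers and more in the fact that the numbers are written down and shared.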

False positives are inevitable, and the way you handle them determines whether monitoring improves or deteriorates over time. A false positive is an alert that triggers even though no security issue exists, and too many false positives make people distrust the system. Effective monitoring treats false positives as tuning opportunities rather than as reasons to abandon alerting. The team reviews why the alert triggered, whether the rule is too broad, and what additional conditions could make it more accurate. Sometimes false positives reveal that normal behavior is not well understood, which means the environment lacks clear baselines. Sometimes they reveal that the alert is correct to flag the behavior, but the behavior itself needs to be made safer, such as when administrators regularly perform high-risk actions without proper change tracking. Beginners often see tuning as optional, but tuning is part of monitoring governance, because it keeps alerts aligned with reality. Over time, tuning produces a small set of alerts that people trust, and that trust is what makes monitoring sustainable.
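Tuning is easier when you measure which rules earn their keep. As a hedged sketch, assume each closed alert is recorded with its rule name and a verdict of either `true_positive` or `false_positive`; the rule names and the fifty percent cut-off below are hypothetical.

```python
from collections import Counter


def rule_precision(dispositions):
    """Map each rule to the share of its closed alerts that were true positives."""
    total, true_hits = Counter(), Counter()
    for rule, verdict in dispositions:
        total[rule] += 1
        if verdict == "true_positive":
            true_hits[rule] += 1
    return {rule: true_hits[rule] / total[rule] for rule in total}


def needs_tuning(dispositions, min_precision=0.5):
    """Return rules whose precision falls below the agreed minimum."""
    precision = rule_precision(dispositions)
    return sorted(rule for rule, value in precision.items() if value < min_precision)


closed = [
    ("admin_login_offhours", "true_positive"),
    ("admin_login_offhours", "false_positive"),
    ("config_change", "false_positive"),
    ("config_change", "false_positive"),
    ("config_change", "true_positive"),
]
print(needs_tuning(closed))  # ['config_change']
```

A low score does not automatically mean the rule is wrong; as noted above, it can also mean the flagged behavior itself needs to be made safer.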

A S I E M is also valuable for detection that is not tied to single alerts, because it can support hunting and trend analysis. Hunting is the practice of searching for suspicious patterns that may not trigger alerts, such as slow credential misuse, subtle lateral movement, or low-and-slow data access. Trend analysis looks for changes over time, such as rising authentication failures, increasing use of privileged accounts, or unusual increases in access to sensitive systems. Beginners may assume monitoring is purely reactive, waiting for alerts, but mature monitoring includes proactive review to find issues before they become obvious. This is particularly important in payment environments, where attackers might try to stay hidden and where small anomalies can be early indicators of compromise. Hunting and trend analysis also help validate that controls are functioning, because a sudden drop in events might indicate log collection failure rather than improved security. When you use the S I E M for both alerting and investigation, you increase the chance of detecting sophisticated threats that do not announce themselves.
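Trend analysis can begin with something as plain as comparing each day's event count to a recent average. The Python sketch below assumes a list of daily counts for one event type; the seven-day baseline and the drop and spike ratios are arbitrary illustrative values.

```python
from statistics import mean


def volume_anomalies(daily_counts, baseline_days=7, drop_ratio=0.5, spike_ratio=2.0):
    """Flag days whose count is far below or above the trailing average."""
    findings = []
    for day in range(baseline_days, len(daily_counts)):
        baseline = mean(daily_counts[day - baseline_days:day])
        if baseline == 0:
            continue  # no meaningful baseline to compare against
        today = daily_counts[day]
        if today < baseline * drop_ratio:
            findings.append((day, "drop"))
        elif today > baseline * spike_ratio:
            findings.append((day, "spike"))
    return findings


counts = [100] * 7 + [105, 30, 400]
print(volume_anomalies(counts))  # [(8, 'drop'), (9, 'spike')]
```

A flagged drop deserves the same attention as a spike, because it may point to a log collection failure rather than a quieter day.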

Monitoring effectiveness also depends on ensuring that critical systems are not only sending logs, but sending the right logs. For example, an authentication system that logs only successes and not failures will hide brute force attempts. A server that logs administrative actions without recording which account performed them will reduce accountability. An application that logs errors without capturing enough context will make it hard to distinguish attacks from bugs. Beginners sometimes assume log sources are naturally complete, but logging is often configurable and can be disabled or misconfigured. Effective monitoring includes periodic validation that logs are being generated, forwarded, and retained as intended, and that key event types are present. This validation is part of proving monitoring works, because a S I E M full of missing fields and gaps is a false comfort. When logging quality is maintained, alerts become more accurate and triage becomes faster because the evidence is already present.
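Periodic validation of logging quality can be partly automated. In this sketch, the required fields and the expected event types per source are hypothetical examples; the point is simply to check that key event types appear and that key fields are populated.

```python
# Hypothetical expectations; adapt the names to your own log schema.
REQUIRED_FIELDS = {"time", "user", "source", "type"}
EXPECTED_TYPES = {"auth": {"auth_success", "auth_failure"}}


def validate_source(source_name, events):
    """Report event types that never appear and events with empty key fields."""
    seen_types = {event.get("type") for event in events}
    missing_types = EXPECTED_TYPES.get(source_name, set()) - seen_types
    incomplete = [
        event for event in events
        if not REQUIRED_FIELDS <= {key for key, value in event.items() if value}
    ]
    return {
        "missing_types": sorted(missing_types),
        "incomplete_events": len(incomplete),
    }


sample = [
    {"time": "2024-05-01T10:00:00+00:00", "user": "alice", "source": "10.0.0.5", "type": "auth_success"},
    {"time": "2024-05-01T10:01:00+00:00", "user": "", "source": "10.0.0.6", "type": "auth_success"},
]
print(validate_source("auth", sample))
```

Here the report would show that failures are never logged and that one event has no usable user, which are the two gaps described above: hidden brute force attempts and reduced accountability.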

Retention and protection of monitoring data are also essential because monitoring data is both useful and sensitive. Logs can reveal system structure, user identities, and patterns of behavior, which makes them valuable to defenders and attackers alike. Effective monitoring ensures logs are protected from tampering, access to logs is restricted, and retention periods are sufficient to support investigations that might start weeks after an intrusion begins. Beginners sometimes treat retention as a storage problem, but retention is a security design choice, because insufficient history limits your ability to detect slow attacks and to determine scope during incident response. Protection is equally important because an attacker who can erase or alter logs can hide their actions and delay discovery. A disciplined program sends logs to a protected central location and monitors for signs of log disruption, such as sudden stops in event flow. When retention and protection are handled well, the monitoring system becomes a trustworthy source of truth rather than a fragile convenience.
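One common way to make tampering detectable, though not the only one and not something this episode prescribes, is to chain each log record to the previous one with a hash. The Python sketch below is a simplified illustration; the record layout is made up, and an attacker who can rewrite the entire store could recompute the chain, so in practice the latest hash would also need to be kept somewhere the attacker cannot reach.

```python
import hashlib
import json

GENESIS = "0" * 64  # starting value for the first link in the chain


def _link(previous_hash, record):
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()


def chain(records):
    """Attach to each record a hash that covers it and everything before it."""
    previous, chained = GENESIS, []
    for record in records:
        previous = _link(previous, record)
        chained.append({"record": record, "hash": previous})
    return chained


def verify(chained):
    """Return the index of the first entry that fails verification, or None."""
    previous = GENESIS
    for index, entry in enumerate(chained):
        if _link(previous, entry["record"]) != entry["hash"]:
            return index
        previous = entry["hash"]
    return None


log = chain([
    {"user": "alice", "action": "login"},
    {"user": "alice", "action": "privilege_change"},
    {"user": "alice", "action": "logout"},
])
print(verify(log))  # None

log[1]["record"]["action"] = "read_file"  # simulate an attacker editing history
print(verify(log))  # 1
```

Deleting an entry from the middle breaks the chain in the same way, because the next entry's hash no longer matches what came before it.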

The human side of monitoring is where effectiveness is either sustained or lost, because people must be able to do the work day after day. Roles and responsibilities must be clear, including who reviews alerts, who performs deeper investigation, who has authority to take containment actions, and how escalation works. Communication must be predictable so that when a high-risk alert occurs, the right people are notified quickly with clear context. Beginners sometimes imagine monitoring is a single security analyst staring at screens, but real monitoring is an operational capability that depends on teamwork and clear processes. It also depends on learning, because every incident and every false positive can improve the program if lessons are captured and rules are refined. When monitoring is treated as a routine service with defined expectations, it becomes resilient to staff turnover and shifting priorities. This is part of why mature monitoring feels calm, because everyone knows what to do, and the system supports them with reliable signals.

As you bring all these ideas together, monitoring effectively with a S I E M, alerts, and triage becomes a disciplined approach to turning raw events into timely action. You begin by collecting the right signals from high-value systems and ensuring time and identity are consistent so correlation is trustworthy. You design alerts around high-impact risk and make them actionable with context, so attention is directed where it matters most. You build a triage process that prioritizes based on asset criticality and privilege level, allowing fast containment decisions without panic. You tune rules continuously to reduce false positives and to improve trust, and you use the S I E M for both alerting and proactive investigation through hunting and trend analysis. You validate that logging remains healthy and complete, and you protect and retain monitoring data so investigations can reconstruct truth even weeks later. Finally, you build clear roles and escalation paths so monitoring becomes a steady operational rhythm rather than a sporadic scramble. When those pieces operate together, the environment becomes harder for attackers to exploit quietly, and the organization gains the confidence that comes from visibility and disciplined response.